I competed in HackTech 2025, a hackathon organized and hosted by Caltech for

undergraduates around the world. At the hackathon, I met Ohm Rajpal, Arnab Ghosh, and Vickie Knight, and we collaborated to create

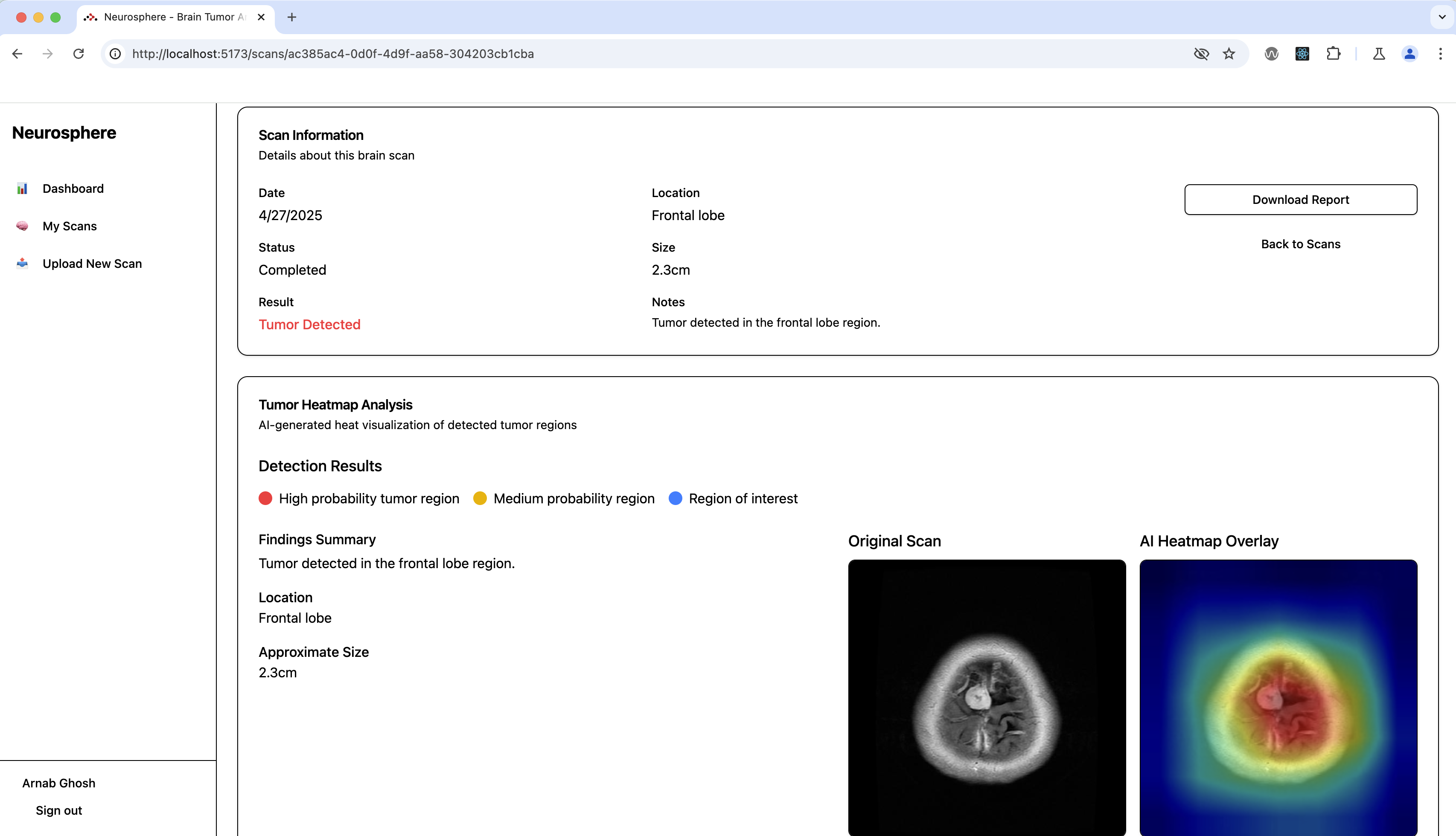

Neurosphere, a web application that locates and classifies brain tumors from

uploaded MRI scans. It also enhances visualization of the brain, enabling doctors to

have a readily accessible map of a tumor's location.

Since I had experience with machine learning, I was responsible for tumor localization and classification. I found a Kaggle dataset of brain MRI images labeled by tumor type, then used PyTorch to load a pre-trained ResNet convolutional neural network (CNN) and fine-tune it on the dataset for tumor classification.

After reaching 98% test accuracy, I researched ways to also locate the detected tumors. I found Grad-CAM, a method that weights the activation maps of the network's last convolutional layer by the gradients of the predicted class score, producing a map of how strongly each location contributed to the prediction. Because a CNN preserves the spatial layout of its input, this map corresponds to regions of the image, which let our app highlight where in the MRI scan the tumor is most likely located. I implemented Grad-CAM in our application and displayed its output as a heatmap with Matplotlib, and it made a valuable addition to our app. I also used the center and spread of the Grad-CAM distribution to determine the brain lobe containing the tumor and to approximate the tumor's size, respectively.
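The post does not include its code, so the following is only a minimal NumPy sketch of the two computations described above, not the Neurosphere implementation. It assumes the activations of the last convolutional layer and the gradients of the predicted class score with respect to them have already been captured (in PyTorch this is typically done with forward and backward hooks on a layer such as `layer4` of a torchvision ResNet). The function names `grad_cam` and `center_and_spread` are hypothetical, and "spread" is read here as the weighted root-mean-square distance from the centroid, which is one reasonable interpretation. Mapping the centroid to a lobe and the spread to a physical size would also need the scan's orientation and pixel spacing, which the post does not describe.

```python
import numpy as np


def grad_cam(activations: np.ndarray, gradients: np.ndarray) -> np.ndarray:
    """Combine conv feature maps into a Grad-CAM heatmap.

    activations, gradients: arrays of shape (K, H, W) for the K channels of the
    last convolutional layer; gradients hold d(class score)/d(activations).
    Returns an (H, W) map scaled so its maximum is 1 (or all zeros).
    """
    # Channel importance: global-average-pool the gradients over H and W.
    weights = gradients.mean(axis=(1, 2))  # shape (K,)
    # Weighted sum of the feature maps, then ReLU to keep only positive evidence.
    cam = np.maximum(np.tensordot(weights, activations, axes=1), 0.0)  # (H, W)
    peak = cam.max()
    return cam / peak if peak > 0 else cam


def center_and_spread(cam: np.ndarray) -> tuple[tuple[float, float], float]:
    """Treat the heatmap as a 2-D weight distribution.

    Returns the weighted centroid (row, col) and the weighted RMS distance
    from that centroid, both in heatmap pixels (assumed definition of spread).
    """
    total = cam.sum()
    if total == 0:
        raise ValueError("heatmap has no positive mass")
    rows, cols = np.indices(cam.shape)
    r0 = (rows * cam).sum() / total
    c0 = (cols * cam).sum() / total
    sq_dist = (rows - r0) ** 2 + (cols - c0) ** 2
    spread = np.sqrt((sq_dist * cam).sum() / total)
    return (float(r0), float(c0)), float(spread)


# Usage with synthetic arrays standing in for captured activations/gradients.
rng = np.random.default_rng(0)
acts = rng.random((8, 7, 7))
grads = rng.normal(size=(8, 7, 7))
heatmap = grad_cam(acts, grads)
if heatmap.sum() > 0:
    (row, col), spread = center_and_spread(heatmap)
```

Because a ResNet's last convolutional layer is much coarser than its input (7x7 for a 224x224 image), the map is normally upsampled to the scan's resolution before it is drawn as a heatmap, for example with Matplotlib's `imshow` using an `alpha` overlay on the MRI slice.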

My teammates worked on the rest of the website, including the frontend, backend, database, and brain visualizer. We seamlessly integrated the model I had trained, and the result was a fully functional app. We submitted our project to the health track of HackTech 2025 and won the "Best Use of MongoDB" award. In addition, we were selected for an interview with representatives from Major League Hacking to demonstrate our project for social media.